One of the most exciting movements in learning, in my view, is personalization. In my company, we are beginning to see major projects that involve creating a personalized experience through learning portals and the interplay of communities with online academies. How does this affect the instructional design and development process? I’d like to offer you some of my own lessons learned in this new arena. But first, let’s consider two important issues that affect your personalization efforts.

First issue: big data

Creating a complete learning ecosystem where learning journeys provide relevant and focused content, while flexing to meet changing business priorities, is one of the most satisfying aspects of working in learning today. Anyone who has worked in learning and knowledge over the past decade or so knows it has long been just around the corner; now it is a reality. However, as the possibilities grow, so does the data. Big data changes the conversations that L&D has with the business. It opens the door to discussions at the top table, because there’s so much that can be measured, sliced, diced, and reported upon.

What this can mean in practice, however, is that L&D, HR, IT, and the board can become paralyzed by the seeming complexity of what is needed. What do we do with all the data? Where does the data reside and what does it look like? On a practical level, the learning record store provides an ideal conduit between enterprise resource planning-level HR and LMS systems, customer relationship management, collaboration tools, intranets, and more, but trying to get all the stars aligned causes one tremendous headache.

Second issue: competency models

Let’s look at this scenario.

You’ve been asked to deliver learning portals that provide a personalized view into “My Learning World.” That is, into what an individual needs in order to:

- Progress their career

- Deliver against their objectives

- Align to the business strategy of the organization

- Have opportunities for talent development

- Receive content that matches personal likes and excludes personal dislikes

- Feel incentivized and rewarded

And, by the way, every piece of content and each interaction must be top quality.

Let me ask you a question. Do you have a global competency model that is entirely consistent across the whole company, fully understood, and used from day-one recruitment through an individual’s entire career? Chances are you don’t and, frankly, won’t; at least not at a level that ensures that every time an individual logs into their learning portal, what they see is entirely up to date and relevant to what they need to deliver and what they need to learn.

But let’s face it, although there are organizations out there with very well adopted and scoped competency models, the competency model is only a small piece of the whole story. It just happens to be a chapter in that story that is great for creating data. But the pace of corporate life today means we have to be very careful about what we commit to: the minute that model drives out-of-date content to employees, all credibility is lost. (Perhaps their HR records have not been kept entirely up to date, which is outrageous, I know, but it happens! Or perhaps our business faces a major shift or opportunity to which we need to respond rapidly.) The personalized learning experience doesn’t really know employees after all, so they go back to finding the insights, advice, and tools they really need elsewhere; opportunity and time are lost.

Finding the path

So I prefer to start projects differently. Embedding evaluation into the ecosystem design and the implementation of that design is essential. A great way to do this with true insight and depth is to work intensely with some well-chosen core business areas in an organization, rather than an instant overall adoption by the entire enterprise. Let me explain how that works.

I spoke on a webinar recently about my preferred launch method, viral adoption. It avoids the tiresome “next big thing” hype and instead drives buy-in that is built on a solid, tangible foundation. It’s easier for learning strategists, designers, and facilitators to build content that meets real needs, and it reduces resistance to change from employees and management alike.

Viral adoption means we can launch with some great core content, facilitate great conversations, support blossoming communities, and get those stories from the front line where the learning is having real impact. Learning paths create the scaffold—meaning that as learners complete formal learning, engage in workplace activities, access performance support content, and participate in conversations, the learning portal really gets to know each individual. The portal can evolve and grow based on real activity, on what really matters.

Another typical scenario is where a time-bound business imperative requires rapid upskilling and collaborative problem solving between colleagues to uncover new insights. This presents a real need with real business measures attached, providing a clear method for measuring the success of the interventions. Engagement with formal learning experiences, conversations, and shared resources, together with measures of community health, provides insights that help map how successful the learning content and facilitation have been in delivering key business goals. Again, a viral approach succeeds because these learning programs emerge as needed, and their significance to the business helps drive adoption while they provide great data for use by management.

The result is that the data we can get is truly valuable, truly insightful. We may start with page impressions, content ranking and recommendations, numbers of posts, etc., but this really only tells us if we got the design right and our internal communications campaign worked.

What we want is to understand how our learning ecosystem is transforming our organization and delivering on our strategy. For this, we have to look beyond typical measures. What often happens when online learning is at the heart of the solution is that we look to visitor numbers, posts on discussions, costs saved, scores on knowledge checks, and the amount of content consumed. This is understandable, as we want to see if what we have created is actually being used. But ROI isn’t about how often learners visit the site, how many points they’ve earned on the leaderboard, or how much stuff they have posted.

Value chain analysis keeps interventions on target

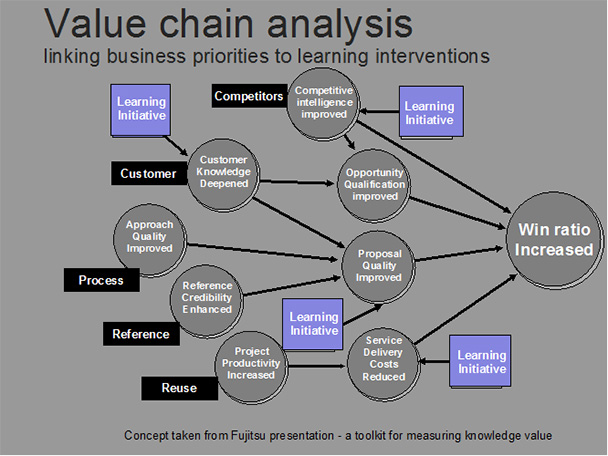

What matters is how much impact this behavior has on day-to-day working life and on the lives of colleagues as they deliver their objectives. It means the way we evaluate learning interventions needs to start with the change we are trying to instill and with ensuring that the learning ecosystem delivers exactly the business results intended. A popular model we use with our customers is value chain analysis, which ensures that the learning interventions deployed at each stage of the ecosystem are correctly targeted at the company objectives.

This illustration of value chain analysis, borrowed from Fujitsu, uses the sales team objective of increasing the win ratio (Figure 1). Each intervention will be aiming for a specific outcome, for which there will be assumptions. Interventions must be measured against the intended outcome. The better the match between assumptions and actual conditions, and between intended outcomes and actual results, the more effective the intervention.

Figure 1: Value chain analysis
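For readers who like to see the logic written down, here is a minimal sketch in Python of how the pieces of a value chain analysis might be recorded: an intervention, its intended outcome, the assumptions behind it, and the actual result. Every name, the two scoring methods, and the workshop figures are my own illustrative assumptions; none of them come from the Fujitsu illustration or from any particular tool.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Assumption:
    """A condition the intervention relies on (hypothetical structure)."""
    description: str
    holds: bool  # did the assumption match actual conditions?


@dataclass
class Intervention:
    """One learning intervention in the value chain (hypothetical structure)."""
    name: str
    intended_outcome: str
    target: float  # intended value of the outcome measure
    actual: float  # observed value of the outcome measure
    assumptions: List[Assumption] = field(default_factory=list)

    def assumption_match(self) -> float:
        """Share of assumptions that held in practice (1.0 if none are listed)."""
        if not self.assumptions:
            return 1.0
        return sum(a.holds for a in self.assumptions) / len(self.assumptions)

    def outcome_match(self) -> float:
        """Actual result as a fraction of the intended result, capped at 1.0."""
        if self.target <= 0:
            raise ValueError("target must be positive")
        return min(self.actual / self.target, 1.0)


# Made-up example tied to a sales objective of increasing the win ratio.
workshop = Intervention(
    name="Negotiation skills workshop",
    intended_outcome="Win ratio rises by 4 percentage points",
    target=4.0,
    actual=3.0,
    assumptions=[
        Assumption("Sales managers coach their teams after the workshop", True),
        Assumption("CRM records are kept up to date", False),
    ],
)

print(workshop.assumption_match())  # 0.5
print(workshop.outcome_match())     # 0.75
```

The point of the sketch is simply that both matches are tracked separately: an intervention can hit its target while resting on assumptions that did not hold, and that is worth knowing before you repeat it.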

This approach differs somewhat from the traditional Kirkpatrick model, which is still needed in the right places, but value chain analysis does a great job of getting to what truly matters.

This approach not only enables us to build learning portals that provide the right combination of performance support, collaborative learning, and career management, but also ensures that we can map the quality of each resource at each level against real business outcomes.