If employees self-select to attend training, how can we make it compelling enough that they will want to participate and, better yet, share it with their peers?

This year, we designed and developed a program for supplier managers, Googlers who partner with third parties to get work done. Studies show that once a partner signs a contract with Google, there is significant value leakage in cost, quality, and ease. (See References at the end of this article for the study citation.) Thoughtful performance management can help minimize this value leakage. We want to have positive, enjoyable partnerships with our suppliers while making sure they continue to deliver quality and value to Google. Effective supplier managers know how to measure performance, manage costs, mitigate risks, ensure contract compliance, and maintain positive supplier relationships.

There are thousands of supplier managers at Google, though this is not a primary job function for most of them. We needed to create a pragmatic and scalable solution that quickly shared best-known practices with any Googler who wanted to learn more about managing supplier relationships. To ensure we achieved our goal of preventing value leakage, we centered our design on business outcomes and the behaviors that would support those outcomes.

We focused on proven tools and directional improvement in business outcomes. This helped us to create a comprehensive training with robust ongoing support that uses scalable media for foundational knowledge and follows through with personalized support on the job. We integrated the training evaluation design with the learning program, and included a pre-survey, community of practice, and on-demand consultation. These supports create an effective way for us to measure impact through continuous data collection.

This approach provides our learners with customized, one-on-one support, while allowing us to measure the frequency and quality with which Google employees (“Googlers”) deploy methods covered in the training.

Behavior-centered program design, not Behaviorism

We avoid training behavior with rewards and punishments. We prefer to tap into intrinsic rewards that motivate behavior change. We do this through shared business goals and values as well as examples of excellence. We train on foundational methods, encouraging our audience to self-assess and apply the tools in whatever way they think best fits the situations in their job. Then, we support them in making adjustments.

Our subject matter expert agreed to focus on specific skills, incidents, and impact on business to inform our design rather than rely on a content-centric or policy-focused approach. We worked together through the entire design process in sessions where we cascaded business outcomes, learning objectives, activities, assessments, evaluation, delivery methods and, finally, content (storyboards). We chose to combine stepped and case-study instructional models that both integrate our evaluation plan and extend into our post-training supports.

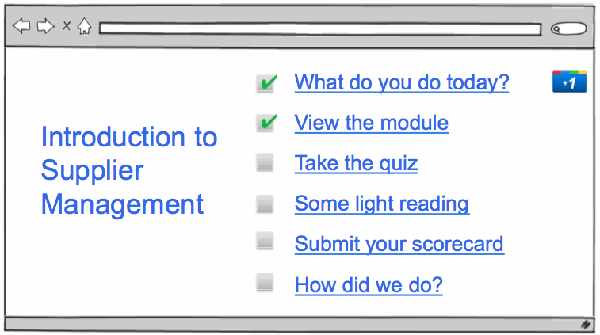

Using the “best tool” to create a learning object for each step in this program design could result in a disconnected experience for learners, and delivering a coherent experience was one of the challenges of mixing Google and third-party creative tools. We created a cohesive end-to-end user experience by arranging the program on a single path (Figure 1). To do this, we used an internally developed curation tool that lets us combine multiple URLs into a single package.

Figure 1. The Introduction to Supplier Management program is on a single path, in order to provide a coherent experience for learners.

Because we chose easy-to-use Google tools and a relatively simple approach to the look and feel of the training, we had very low hard costs (e.g., development software, artwork licensing), and we found that we had below-average soft costs (e.g., design and development time). This helps us achieve a good return on investment while we create flexible programs that are easy to change on the fly without expert technical knowledge.

Setting the stage for a Googley learning experience

Tone, quality, and usefulness are critical to the success of any training at Google. Our culture is highly collaborative and grassroots, and Google employees self-select into most of our trainings. To have an impact, our training teams must create fun, relevant experiences that Googlers want to share with each other. This is no small feat!

Our audience accepts and expects humor, variety, fun, efficiency, quality, practical value, and flexible formats in training. Although we do not see evidence that designing for visual, auditory, or kinesthetic learning style preferences leads to improved outcomes, we strongly believe in using multiple media formats appropriate to each piece of content, snappy pacing, meaningful interactivity, and a light-hearted tone to encourage interest and, therefore, consumption. In other words, we view active, positive attention as a prerequisite of knowledge transfer.

We designed for content quality using concise learning objectives, which helped the pacing, relevance, and credibility of our content. In our instructional and case study sections, we also featured unscripted Googlers who not only exemplified excellence in supplier management but also spoke directly to the concerns of our audience from a shared point of view.

Bringing it all together

The use of primarily Google or open-source tools when developing this program was not a coincidence or a decision made from necessity. One of Google’s core operating principles is “eat your own dog food,” meaning, if you have confidence in your products, use them! We really take this to heart in the learning and development space.

We are using the following Google products for this program:

- Internal curation tool: Web-based app that allows users to track their progress through multiple learning items as one cohesive presentation and connect with peers studying the same item.

- CloudCourse (open sourced): A Learning Management System developed internally, which we use for course listing and discovery.

- Google Sites: Serves as our main program page, where participants can go to learn about the program, launch the training, copy templates, and request individual support.

- Google Forms: Used in our evaluation strategy to conduct pre- and post-learning surveys measuring participant satisfaction (level 1), behavior change (level 3), and business impact (level 4).

- Google Docs Video: Training trailers (teaser videos) we can embed or launch from QR codes for marketing campaigns.

- Google Docs: Houses our design docs, storyboards, flat/screen reader versions, and templates embedded into our Sites pages.

- Internal Tools: A user feedback tool to assess page quality, and an internal support ticketing system to track the scorecard review process and other requests.

- Google Analytics: Used to track Web site usage, content popularity, and eLearning hits.

We also used these free or open-source tools:

- Moodle (“Noodle”): An internal instance used to deliver quiz questions at the conclusion of the training module.

- Audacity: Audio recording for eLearning modules.

When there truly was no free Google or open-source option, we used licensed software and artwork to fill in the gaps (e.g., Articulate, iStockphoto, FlipCams, Camtasia, and Photoshop).

We wanted to include easy-to-use formats for all Googlers, who have a wide variety of preferences for information resources. We wanted a training that anyone could use, consume in their preferred format, or read in their native language without having to ask for accommodation. We made a screen-reader-friendly version of the training available from day one. This is also useful because a good portion of our audience rejects Flash-based trainings and wants a text-only, searchable version. For our learners who prefer to use Google Translate, this version of the training offers an easy-to-convert document.

“Fuzzy evaluation” and evidence of change

We’re “scrappy” and innovative in our approaches to learning and development, but we’re still highly analytical and data-focused. Our main goal with an evaluation design like this is to tell a story, even if the data is only directional rather than causal. We can still show the general impact on business of a program in a compelling way.

We designed a comprehensive evaluation strategy for this training program, incorporating all four levels of the Kirkpatrick model and using only Google and open-source tools. Our learning evaluation strategies include multiple sources of data to increase validity.

Our evaluation methods include:

- Level 1: Satisfaction surveys, administered immediately after the participant completes the online module. (Google Forms)

- Level 2: A fifteen-question quiz, administered at the conclusion of the online module. (Moodle)

- Level 3: Pre- and post-learning participant surveys to assess perceptions and current state practices (frequency and quality), in addition to follow-up assignments that are evaluated against a standardized rating scale. (Google Forms)

- Level 4: Pre- and post-learning participant surveys targeted to assess the perceived value supplier managers are receiving, founded on baseline measures crafted from industry research studies. (Google Forms and Sites)
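To make “directional” concrete, here is a minimal sketch of how pre- and post-learning survey responses could be compared. It is only an illustration under stated assumptions, not a description of our actual analysis: the question names, the 1-to-5 rating scale, and every response value below are hypothetical, and the function `directional_change` is a name invented for this example.

```python
from statistics import mean


def directional_change(pre_scores, post_scores):
    """Return the shift in mean rating (post minus pre) for one survey question.

    A positive value suggests movement in the desired direction. It is a
    directional signal only and says nothing about causation.
    """
    if not pre_scores or not post_scores:
        raise ValueError("both surveys need at least one response")
    return mean(post_scores) - mean(pre_scores)


# Hypothetical 1-5 ratings; made-up values, not real survey data.
pre_survey = {
    "uses_performance_metrics": [2, 3, 2, 4],
    "holds_business_reviews": [1, 2, 2, 3],
}
post_survey = {
    "uses_performance_metrics": [3, 4, 4, 5],
    "holds_business_reviews": [2, 3, 3, 4],
}

# One directional change per question asked in both surveys.
changes = {
    question: directional_change(pre_survey[question], post_survey[question])
    for question in pre_survey
}

for question, change in changes.items():
    print(f"{question}: {change:+.2f}")
```

Comparing means per question keeps the story simple. With small or self-selected samples, the result should be read as a trend to investigate rather than as proof of impact.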

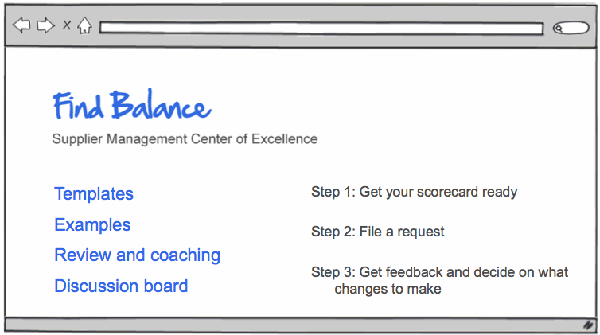

Given our behavior-centered design approach, we wanted to incorporate learning reinforcement and support models into the program to further encourage change. To limit the participant’s time requirement, we layered our reinforcement strategy with our evaluation strategy, using follow-up assignments to both reinforce learning and evaluate impact. This provides value to our learners and increases participation. The follow-up assignment asks participants to build a “supplier scorecard” of their own, using the methods taught in the training, to help them measure the performance of their suppliers. They then submit their scorecards to the “Supplier Center for Excellence” at Google, where a member of the Supplier Management team evaluates their work against a standardized rating scale and provides personalized feedback and coaching (Figure 2). This approach provides our learners with customized, one-on-one support, while allowing us to measure both the frequency and the quality with which they perform the desired behavior.

Figure 2. The evaluation and reinforcement strategies come together in a simple scorecard approach.
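As a rough sketch of how scorecard reviews could yield both a frequency and a quality measure, consider the following. Everything in it is an assumption made for illustration: the 1-to-4 rating scale, the `ScorecardReview` record, the `summarize_reviews` function, and the choice to treat “frequency” as the share of trained participants who submitted at least one scorecard. The sample data is made up.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class ScorecardReview:
    """One reviewed scorecard submission (hypothetical record layout)."""
    participant: str
    rating: int  # assumed standardized scale: 1 (needs work) to 4 (excellent)


def summarize_reviews(reviews, trained_count):
    """Return (frequency, quality) for a set of reviewed scorecards.

    frequency: share of trained participants who submitted at least once.
    quality: mean rating across all submissions, or None if there are none.
    """
    if trained_count <= 0:
        raise ValueError("trained_count must be positive")
    submitters = {review.participant for review in reviews}
    frequency = len(submitters) / trained_count
    quality = mean(review.rating for review in reviews) if reviews else None
    return frequency, quality


# Made-up example: four people completed the training, two submitted scorecards.
reviews = [
    ScorecardReview("participant_a", 3),
    ScorecardReview("participant_b", 4),
    ScorecardReview("participant_a", 2),
]
frequency, quality = summarize_reviews(reviews, trained_count=4)
print(f"submission rate: {frequency:.0%}, mean rating: {quality}")
```

Counting unique submitters rather than total submissions keeps repeat submissions from inflating the frequency figure, while the mean rating still reflects every reviewed scorecard.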

What we learned

The feedback and interaction we have received from the program have been very useful. For example, we asked in our Level 1 survey, “What have you NOT learned today that you expected to learn during this training?” We are using the answers as clear direction from our learners about gaps that we need to fill in the content published on our community of practice. We also asked questions before the training, and again 90 days after it, about the perceived value of supplier relationships, metrics in performance management, and the use of periodic business reviews. This data gives us a directional picture of how perceptions and practices change after the training.

We had strong responses that the training is a good use of time, is applicable, and is worth recommending. We attribute this success to a great subject-matter-expert partnership, clear business outcomes, specific learning objectives, and snappy design. Learners overwhelmingly thought that the delivery format was well suited to the content. They enjoyed the methods we presented, and even somewhat-experienced supplier managers felt that the training was a good use of an hour. One learner remarked, “I am brand new to handling suppliers, so I learned a lot! I think the main point I will take away is to think more about the supplier/Google relationship going both ways.” We want to have positive, enjoyable partnerships with our suppliers while making sure they continue to deliver quality and value to Google, and this program is supporting that goal.

References

Webb, M., & Hughes, J. (2009, Autumn). Building the case for SRM. CPO Agenda. http://www.cpoagenda.com/previous-articles/autumn-2009/features/building-the-case-for-srm/

Clark, R. C. (2010). Evidence-Based Training Methods: A Guide for Training Professionals. Alexandria, VA: American Society for Training & Development.