I attended DevLearn 2019. It was terrific, and I had such a good time there. One theme that I kept hearing over and over was augmented reality. I loved the Thursday keynote with Helen Papagiannis. She spoke about augmented reality as an extension of our real world, one that allows us to visualize, annotate, and tell stories right within what we are seeing. Check out her book Augmented Human. It is a great read.

The conference presentations got me thinking about examples of augmented reality (AR) that I have seen. AR, it seems to me, has enormous potential in the learning space, maybe more than virtual reality (VR). With AR, we are not trying to recreate the real world or place the learner in an unfamiliar environment. (Don’t get me wrong: there is a place for that.) Instead, AR adds context and interaction to what the learner is seeing at the moment in the real world, and helps the learner see and explore what could be in their view.

What do you think of when you hear the words “performance support”? We may each have our own interpretation, but for me, performance support gives the learner instruction on how to perform actions while on the job, in the moment they need it. That has AR written all over it.

AR in performance support puts learning content in the context of what the learner is seeing. It enhances the real world, guides the learner through the steps they need to take, and even allows them to explore content they cannot easily access otherwise.

That has enormous potential for the learning and development space and gets me super excited about using AR. In this article, I’ll explore some possible scenarios for using AR in your performance support materials.

AR cards

You might be wondering what I mean by that. Within my company, I teach a lot of software applications. I find that people forget what software to use in certain situations. I have been exploring the creation of physical cards for new employees, along with an app they can download to scan the cards.

These cards contain visual cues that trigger experiences within the app. Once a card is scanned, the app provides additional content that the learner can explore. Maybe it is a description of the software or a link to examples of when and where you would use it. The card alone serves as a reminder of the program, and scanning it lets the learner explore in more depth.

This is a simple example, but I have seen good results with this. All it takes is the ability to print out the cards, then design and develop the experience around those cards.
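To make the card idea a little more concrete, here is a minimal sketch in Python of the lookup that could happen after a card is recognized. It assumes the AR tool reports a text identifier for the image it recognized. The identifiers, the `CardContent` fields, and the example.com links are all hypothetical placeholders, not part of any particular AR product.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class CardContent:
    """Content shown to the learner after a card is scanned (hypothetical fields)."""
    title: str
    description: str
    examples_url: str


# Hypothetical catalog: one entry per printed card, keyed by the identifier
# that the image-recognition step is assumed to report.
CARD_CATALOG = {
    "card-spreadsheet": CardContent(
        title="Spreadsheet tool",
        description="Use this for budgets and quick data summaries.",
        examples_url="https://example.com/spreadsheet-examples",
    ),
    "card-ticketing": CardContent(
        title="Ticketing tool",
        description="Use this to log and track support requests.",
        examples_url="https://example.com/ticketing-examples",
    ),
}


def content_for_card(card_id: str) -> Optional[CardContent]:
    """Return the content for a recognized card, or None if the card is unknown."""
    return CARD_CATALOG.get(card_id.strip().lower())
```

Keeping the content in a simple catalog like this means you can add a new card by printing it and adding one entry, without changing the rest of the experience.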

Office signs

Imagine you are a new employee trying to learn where things are and who is who. What if you turned imagery around the office, such as signs, into an AR experience? That experience could help people get oriented to places and people.

We created signs similar to Figure 1 for different departments.

Figure 1: Department signs guide new employees by using AR

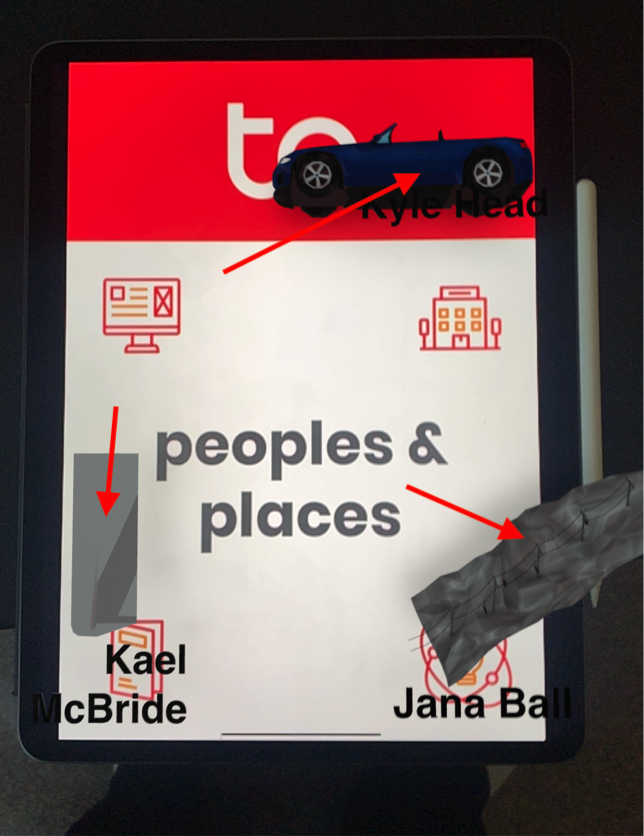

Someone opens an AR app and scans the sign. The app then shows graphic links to content about the people in that office (Figure 2).

Figure 2: New employees can scan a sign to learn more about the office and the people who work there

In Figure 2, you see graphics and text that the app provides. Each graphic in this case is an image related to the person named in the text. Kyle’s graphic is a car, Kael’s is a GameBoy, and Jana’s is a ski slope. Using the app, if I tap or get close to one of the names, it shows additional content about that person, such as their interests or personality (Figure 3). Again, this is pretty straightforward, but it allows new employees to explore the office on their own and helps them get to know the people and places within it.

Figure 3: The AR app can show relevant information about individuals

Guided maps

You may have control over posting signs around the office, but some of you may not. Another example worth exploring is guided maps. Guided maps could help someone navigate a new area or a new office, or help someone in a warehouse find where things are located. Imagine even a customer being able to open an app and be guided to the product they are trying to find (Figure 4).

Figure 4: A guided map helps customers find the product they are looking for

Building this one is a little more complicated than recognizing an image and creating the experience around that image. It requires geolocation: being able to tell where the learner is, then guiding them along the path and even pointing out things along the way.
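As a rough illustration of the geolocation part, the sketch below uses the standard haversine and initial-bearing formulas to work out how far away a waypoint is and in which direction to point the learner. It assumes the device can supply latitude and longitude in degrees, which is often not reliable indoors. The function names are my own and do not come from any AR toolkit.

```python
import math

EARTH_RADIUS_M = 6_371_000  # mean Earth radius in meters


def distance_m(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance in meters between two points (haversine formula)."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    d_phi = phi2 - phi1
    d_lambda = math.radians(lon2 - lon1)
    a = (math.sin(d_phi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(d_lambda / 2) ** 2)
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def bearing_deg(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Initial compass bearing from point 1 to point 2 (0 = north, 90 = east)."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    d_lambda = math.radians(lon2 - lon1)
    y = math.sin(d_lambda) * math.cos(phi2)
    x = (math.cos(phi1) * math.sin(phi2)
         - math.sin(phi1) * math.cos(phi2) * math.cos(d_lambda))
    return (math.degrees(math.atan2(y, x)) + 360) % 360
```

An app could call these each time the learner's position updates, then draw an arrow at the returned bearing and show the remaining distance to the next stop.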

Step-by-step scenarios

This one, I think, is the most applicable to performance support. Earlier this year, I attended Realities360, a great conference for anyone wanting to step in and learn how to do AR and VR. (In 2020, Realities360 will be merged with Learning Solutions 2020 in Orlando.) I went to a session in which the presenter talked about using a HoloLens (Figure 5).

Figure 5: A HoloLens AR viewer

Participants were given a real mechanical part they had never seen before. In the HoloLens, they saw a 3D version of the same part they had in their hand, along with a step-by-step animation of how to disassemble it. They would watch the screen, see how the first step was done, and then say “Step 2” to go on to the next step. They saw it, and then they performed the action.
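The voice-driven flow in that session can be sketched as a small piece of logic that listens for a "Step N" command and moves to that step. This is only my guess at the general idea, not how the HoloLens demo was built. It assumes a speech recognizer has already turned the learner's words into text with the number written as digits, and the `StepGuide` class and sample steps are hypothetical.

```python
import re
from typing import List, Optional

# Matches spoken commands such as "Step 2" or "step 10" (case-insensitive).
_STEP_COMMAND = re.compile(r"^\s*step\s+(\d+)\s*$", re.IGNORECASE)


class StepGuide:
    """Tracks which instruction the learner is on (hypothetical helper)."""

    def __init__(self, steps: List[str]) -> None:
        if not steps:
            raise ValueError("a guide needs at least one step")
        self._steps = list(steps)
        self._current = 0  # zero-based index of the step being shown

    @property
    def current_step(self) -> str:
        return self._steps[self._current]

    def handle_command(self, spoken_text: str) -> Optional[str]:
        """Jump to the requested step and return its instruction.

        Returns None, and stays on the current step, when the text is not
        a "Step N" command or N is outside the guide.
        """
        match = _STEP_COMMAND.match(spoken_text)
        if match is None:
            return None
        requested = int(match.group(1))
        if not 1 <= requested <= len(self._steps):
            return None
        self._current = requested - 1
        return self.current_step
```

Ignoring anything that is not a valid command keeps the learner on the current step, so stray conversation near the headset does not skip ahead.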

Imagine being guided through a task you have never done and seeing exactly how each step is done in the moment. I had witnessed interactions like this before, but this demonstration felt more immediate.

Instruction on physical devices

Another example of AR use is providing instruction on any physical device. Part of AR is the ability to scan an object, or even use machine learning to teach the computer what a physical object is. Scanning is simpler, but it doesn’t always work in every environment unless you feed the device several scans taken in different conditions. A machine learning model can be trained on many situations and orientations of an object, which helps you get more accurate recognition.

So after you scan the object, you then create the AR scenario around that object. (Figure 6)

Figure 6: AR can provide instruction on any physical device

Imagine that you are a new technician, and you pull out an iPad to review the different components of an object with guides on what to do.

The eLearning Guild's Mark Britz once shared an experience he had while waiting for a technician to come out to fix his internet. He waited six hours for the technician to arrive. Once there, the technician spent maybe five minutes on the device and had things up and running. Mark works from home, so he was out six hours of work for something simple.

Imagine Mark being able to pull out his device, scan the object, and be walked through some simple troubleshooting steps. That could have saved him a whole lot of time. Or imagine seeing assembly instructions for putting an object together after ordering it from a website. There is a lot of potential in this example.

Seeing inside of objects

This one, I think, is huge for learning. There may be times when we are teaching someone about an object that is either hard to explore or hard to access. AR gives us the ability to place that object right in front of us and explore it from every angle; you could even build interactivity that opens up the object to reveal more detail.

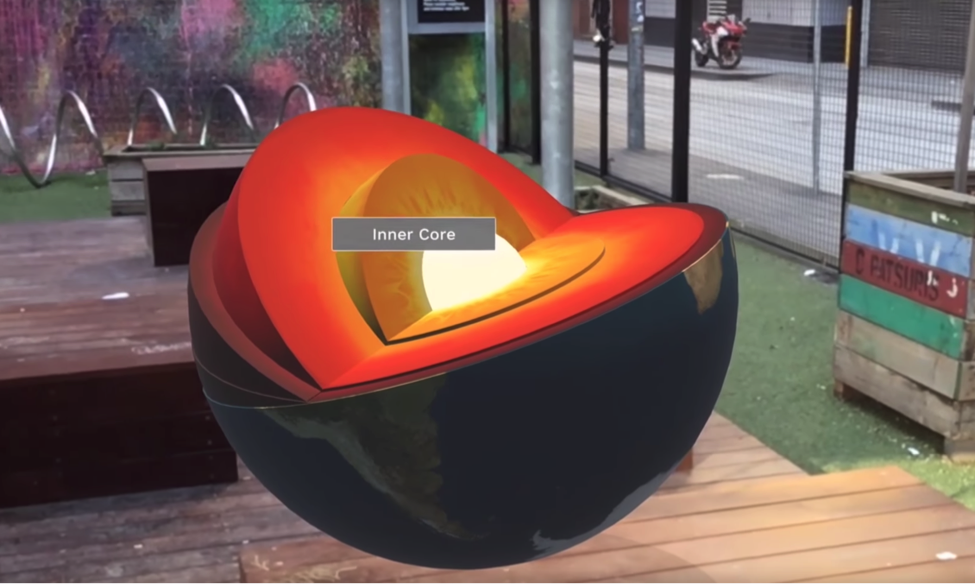

Figure 7: AR can show the inner details of objects

In Figure 7, the user sees an image of the earth. It is obviously not to scale, but it does allow the person to explore around the planet. Once they get close, they can trigger the earth to open up and reveal what’s inside.
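The "get close to trigger" behavior usually comes down to a distance check between the device and the virtual object. Here is a minimal sketch, assuming the AR framework gives you both positions as x, y, z coordinates in meters. The function name and the half-meter default are hypothetical choices for illustration.

```python
import math
from typing import Tuple

Point = Tuple[float, float, float]  # x, y, z in meters


def should_reveal(camera: Point, target: Point,
                  trigger_distance_m: float = 0.5) -> bool:
    """Return True when the camera is within the trigger distance of the object."""
    return math.dist(camera, target) <= trigger_distance_m
```

An experience would run this check as the learner moves and play the opening animation the first time it returns True.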

You could do the same thing for a large piece of equipment or some object that you want to introduce someone to before they come into direct contact with it. To me, this is like taking a textbook and bringing it to life.

Conclusion

These examples are just some of the ways you could use AR, and there are many more possible scenarios. This kind of development may seem daunting, but my suggestion would be to take it step by step. Try one thing out, such as a simple image trigger built with a tool like Zappar or Reality Composer. Once you do that, you will be hooked.

I think AR is going to be more and more relevant in the future, and it is a space where a lot of learning will occur. What do you think? What are some other examples I did not cover here?