When you are building augmented reality (AR) experiences, there is an important concept to understand: working with anchors. I will explore AR anchor types in this article.

Anchors are one of the most significant differences between augmented reality and virtual reality (VR). In VR, the user does not see the real world, so the developer can “place” the learner in any virtual location to begin the experience. With AR, the learner can see the real world in or through the device display. The device and the software platform have to recognize something in the real world and then build the experience around that. Specifically, in AR the experience needs to know where to place the content (hence the name: anchor). The anchor also triggers the experience.

How does this work?

Anchors are objects that AR software can recognize, and they help solve the key problem for AR apps: integrating the real and virtual worlds. AR platforms (such as ARCore, ARKit, and Vuforia) approach this problem in different ways, but they all deal with the same tasks: real-world detection and tracking.

AR platforms rely on the sensors in supported devices (mainly iOS and Android devices, but also head-up displays, or HUDs, such as Microsoft HoloLens) to support environmental understanding. This includes detecting the size, location, position, and orientation of surfaces; estimating real-world lighting conditions; and tracking motion. The main sensors that AR uses to detect the device's location relative to its surroundings are cameras. Some platforms also support recognition of different types of visual objects based on shape (box, cylinder, plane), text, and QR codes.

This article is primarily concerned with iOS and Android devices, and not with HUDs such as Google Glass, which project information onto a flat display without embedding the content in the real world.

Picking the anchor type

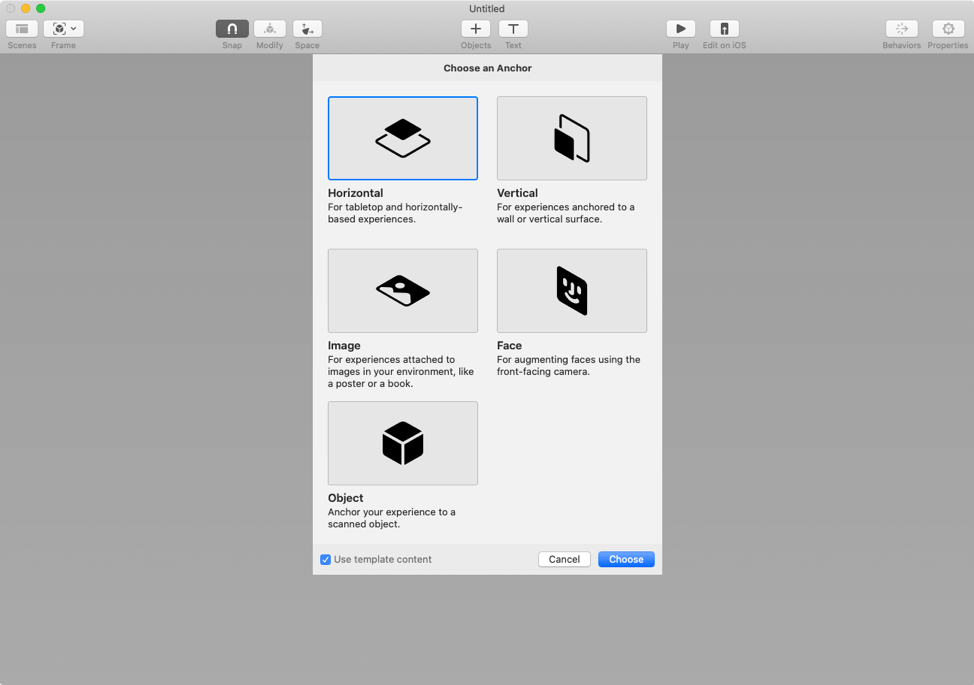

To begin, the AR developer decides which kind of anchor to use. There are several different types of AR anchors. Figure 1 shows the basic types as they appear in Reality Composer, an app available for iOS and macOS that lets developers and graphic artists build realistic AR experiences. The types of anchors available to the developer depend on the tool in use. This article won’t address the specifics of how to work with each anchor; it will briefly explain each anchor type.

Figure 1: Anchor types in Reality Composer


Image anchor

The image anchor is one of the most commonly used types. This anchor lets you tie your AR experience to an image that exists in the real world. The image could be a magazine ad, a printed sign in your office, or a billboard. Your image must provide enough detail to be recognizable: distinct patterns, images, logos, text, or anything else that will help your image stand out.

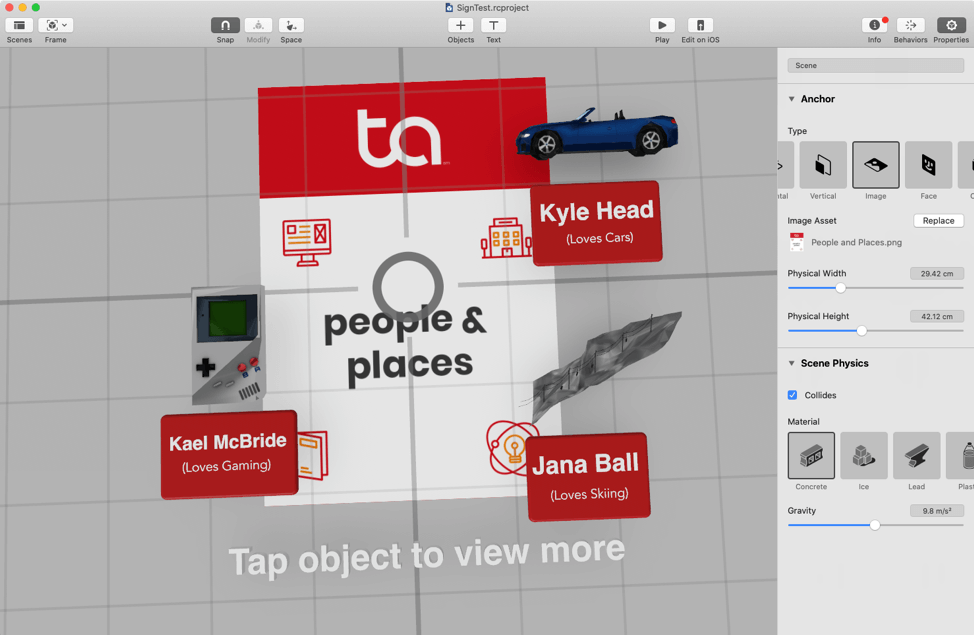

You need a digital version of your image, which you use in your development environment. (Figure 2) The image in Figure 2 also appeared in an earlier article, if you’d like to see how the image relates to content. You then use the authoring tool to develop the AR experience around that image.

Figure 2: Image anchor


Once the camera scans the image, the AR experience shows up on or around the image. The experience even moves with the image.
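One way to picture why the experience moves with the image: the content's position is stored relative to the anchor, not relative to the room. The sketch below is a simplified illustration in plain Python, not the API of any AR platform. The names `AnchorPose` and `to_world` are hypothetical, and the anchor's pose is reduced to a position plus a rotation about the vertical axis.

```python
import math
from dataclasses import dataclass
from typing import Tuple

Vec3 = Tuple[float, float, float]


@dataclass
class AnchorPose:
    """Hypothetical, simplified pose: position in meters plus yaw in degrees."""
    x: float
    y: float
    z: float
    yaw_degrees: float = 0.0


def to_world(anchor: AnchorPose, offset: Vec3) -> Vec3:
    """Convert an offset defined relative to the anchor into world coordinates."""
    ox, oy, oz = offset
    yaw = math.radians(anchor.yaw_degrees)
    # Rotate the offset about the vertical (y) axis, then shift by the anchor position.
    wx = anchor.x + ox * math.cos(yaw) + oz * math.sin(yaw)
    wz = anchor.z - ox * math.sin(yaw) + oz * math.cos(yaw)
    return (wx, anchor.y + oy, wz)


# The label sits 10 cm above the image, wherever the image happens to be.
label_offset = (0.0, 0.1, 0.0)
poster_on_wall = AnchorPose(x=1.0, y=1.5, z=-2.0)
poster_moved = AnchorPose(x=2.0, y=1.5, z=-2.0)

print(to_world(poster_on_wall, label_offset))
print(to_world(poster_moved, label_offset))
```

Because only the anchor's pose changes between the two calls, the label follows the image without the developer repositioning anything.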

Plane anchors

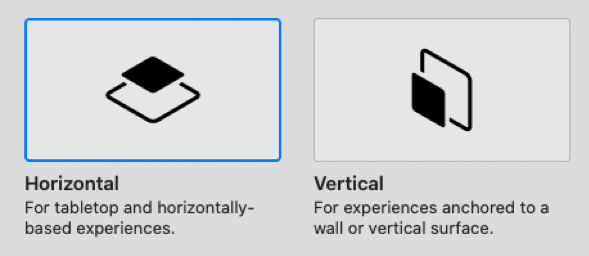

There are really three types of plane anchors: horizontal plane, vertical plane, and mid-air plane. (Figure 3) Reality Composer by Apple and Adobe Aero are tools that embed an experience in the horizontal and vertical planes.

Figure 3: Plane anchors

With these anchors, the device scans for a plane (ground or wall). Once that plane is found, the app builds the AR experience on it. This could create a bird's-eye view of a world that the user can explore (Figure 4), or it could allow the user to place objects in the real world, as the IKEA and Amazon apps do.
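The platform handles plane detection for you, but it can help to see what separates a horizontal plane from a vertical one in principle: the direction the surface faces compared with "up." The following is a conceptual sketch only, not how ARKit, ARCore, or any authoring tool necessarily implements it. The function name `classify_plane`, the 15-degree tolerance, and the convention that +y points up are all assumptions made for illustration.

```python
import math


def classify_plane(normal, tolerance_degrees=15.0):
    """Label a surface 'horizontal', 'vertical', or 'other' from its normal vector.

    Assumes a coordinate system in which +y points up (an assumption of this sketch).
    """
    nx, ny, nz = normal
    length = math.sqrt(nx * nx + ny * ny + nz * nz)
    if length == 0:
        raise ValueError("normal must be a non-zero vector")
    # Angle between the normal and the vertical axis; abs() treats
    # upward-facing and downward-facing surfaces the same way.
    angle = math.degrees(math.acos(min(1.0, abs(ny) / length)))
    if angle <= tolerance_degrees:
        return "horizontal"  # faces up or down: floor, tabletop, ceiling
    if angle >= 90.0 - tolerance_degrees:
        return "vertical"    # faces sideways: wall
    return "other"           # sloped surface


print(classify_plane((0.0, 1.0, 0.0)))  # a floor-like surface
print(classify_plane((1.0, 0.0, 0.0)))  # a wall-like surface
```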

Figure 4: Using a plane anchor

Face anchors

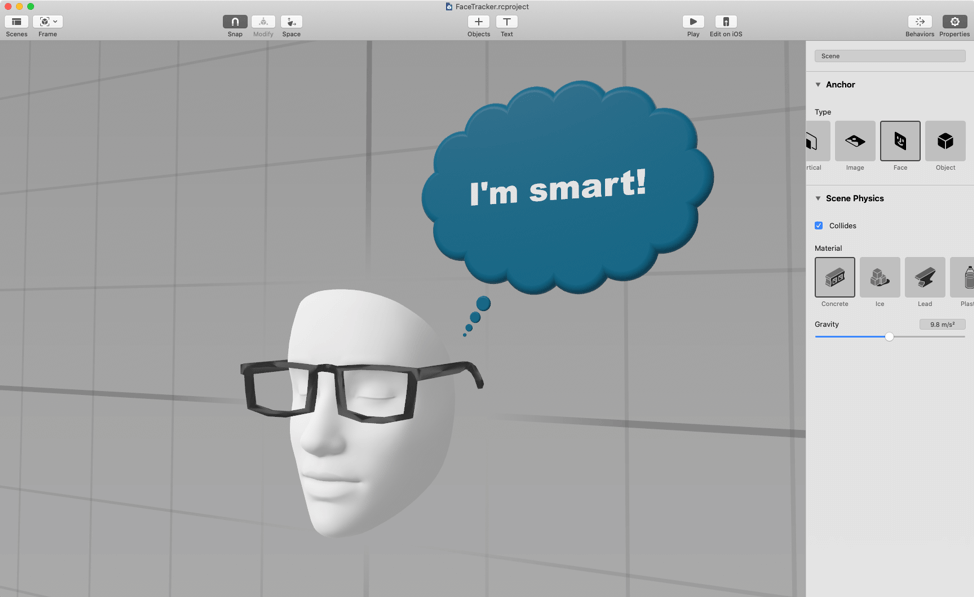

Another common anchor in AR is the face anchor or face tracker. (Figure 5) Reality Composer and Spark AR can use this one. These tools use the person's face as the tracker, to build the experience around the face.

Figure 5: A face anchor

You see this commonly used with social media filters and Apple's Memoji features. When developing for this kind of feature, Reality Composer just gives you a generic face to build the experience on. Spark AR allows you to get more in-depth for different types of faces.

3-D object anchors

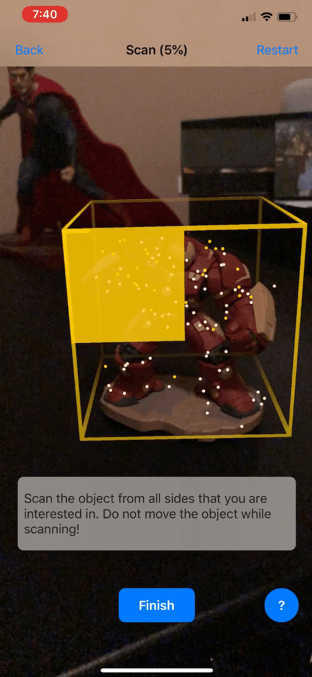

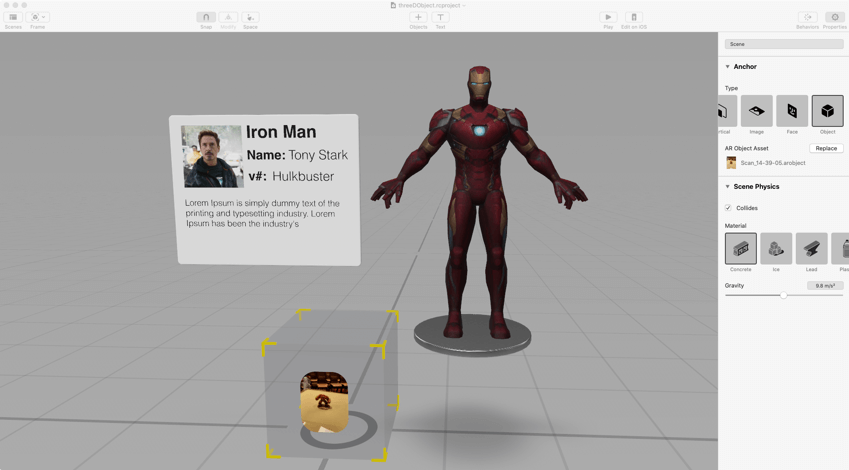

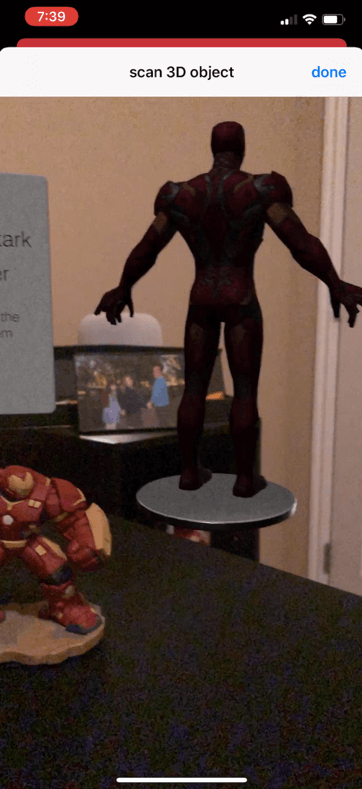

One of my favorite anchors is the object anchor. This anchor allows the platform to detect a 3-D object in the real world and build the experience around that 3-D object. (Figure 6)

Figure 6: Using a 3-D anchor

There are two steps when working with objects. First, scan the object using some type of scanner app. Then import the scan into the tool. The authoring environment will give the developer the dimensions of the object, but placing the experience around the object may require some testing. (Figures 7 and 8)

Figure 7: A 3-D scan imported into a tool

Figure 8: 3-D object placed into a physical environment
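Since the authoring environment reports the scanned object's dimensions, you can work out a rough starting position for content from those numbers before testing on the device. This is a hypothetical sketch: `label_position_above` and the 5 cm default margin are illustrative assumptions, not features of any tool named in this article.

```python
from typing import Tuple

Vec3 = Tuple[float, float, float]


def label_position_above(center: Vec3, size: Vec3, margin: float = 0.05) -> Vec3:
    """Return a point centered just above an object's bounding box.

    center: center of the bounding box, in meters
    size:   (width, height, depth) of the bounding box, in meters
    margin: gap between the top of the object and the content, in meters
    """
    cx, cy, cz = center
    _, height, _ = size
    # Top of the box is half the height above its center; add the margin on top.
    return (cx, cy + height / 2.0 + margin, cz)


# A 20 cm cube whose center is 10 cm above the table surface.
print(label_position_above(center=(0.0, 0.1, 0.0), size=(0.2, 0.2, 0.2)))
```

A computed position like this is only a first guess; as noted above, the final placement usually still needs testing against the real object.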

One reason I love working with the Reality Composer and Adobe Aero apps on my iPad is that I can quickly view the object in real life and edit even while I see the object. This makes placing the AR content easier to do.

Conclusion

Once the anchor is created, the developer uses an authoring tool such as Unity with Vuforia, Reality Composer by Apple, or Adobe Aero to compose and edit what happens around that anchor. The developer determines what is close to the anchor, what is farther away, and what even circles or overlaps the anchor.
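Authoring tools let you arrange content visually, but the underlying idea is simple geometry measured from the anchor. As a minimal sketch, assuming the anchor sits at the origin of its own coordinate system with +y pointing up, the hypothetical helper `ring_positions` below spaces items evenly on a circle around the anchor; a larger radius places them farther away.

```python
import math


def ring_positions(count, radius, height=0.0):
    """Evenly space `count` items on a horizontal circle around the anchor origin."""
    if count < 1:
        raise ValueError("count must be at least 1")
    positions = []
    for i in range(count):
        angle = 2.0 * math.pi * i / count
        # x and z trace the circle; y keeps every item at the same height.
        positions.append((radius * math.cos(angle), height, radius * math.sin(angle)))
    return positions


# Four info panels circling the anchor at half a meter, plus a farther ring at 2 m.
near_ring = ring_positions(count=4, radius=0.5)
far_ring = ring_positions(count=4, radius=2.0)
for position in near_ring:
    print(position)
```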

There may be other kinds of anchors depending on your tool, but these are the most common ones. Understanding these anchors is the first step in building an experience. This really is the main difference between developing for AR and developing for VR. You work with the same content, but with AR you build your experience around these anchors.

Want more?

If you want to explore more about building AR experiences with Vuforia and Unity, Jeff Batt will teach a workshop at Realities 360 in March of 2020: "P16 - BYOD: Creating Mobile Augmented Reality Experiences with Unity". In this hands-on workshop you'll get started developing mobile apps that leverage both ARKit 2 and ARCore functionality using Unity. You will learn how to build AR learning experiences for iPhones, iPads, and Android devices, reaching millions of users with devices they already have. You will learn how to trigger these augmented learning experiences with print material and 3-D objects and receive resources and sample files that you can use long after the workshop is over.

Jeff will also teach a concurrent session at Realities 360, "514 - BYOD: Prototype and Develop AR Experiences With Your iPad". In this session, you’ll discover how to start developing simple 3-D objects on your iPad with Reality Composer, and how to add interactivity to them. You’ll then explore how to animate and add spatial audio to your AR experiences.

Register for Realities 360 by February 7, 2020, and receive a $150 discount! Registration for Realities 360 and for Jeff's pre-conference workshop is now open.