Now, before we get started, don’t get me started. I completely agree that in a perfect world everything “training” would involve high-end, interactive, emotionally compelling, 3-D simulations tied completely to the employee’s immediate work needs. In a perfect world there would be no multiple-choice quizzes (how much of your job involves taking multiple choice quizzes?) or requirements to memorize facts.

But in our imperfect world of 2010, we have many, many trainers and instructional designers doing their best to design simple online assessments, sometimes because management says to, sometimes because a regulation says to, and sometimes because that’s the best they can do with what they have right now. So let’s keep pushing toward the future world, but in the meantime look at ways we can make some simple improvements to what we’re doing now.

Feedback that helps

Most online quizzes culminate in feedback that looks something like this:

Figure 1: Typical online feedback

The problem here is that the feedback doesn’t really do anything to support learning. Rather, it encourages guessing. A better approach? Remember that most learners want to figure things out for themselves. The example below gives a little nudge back toward relevant training content and, it’s hoped, will help the learner understand why the answer is not correct:

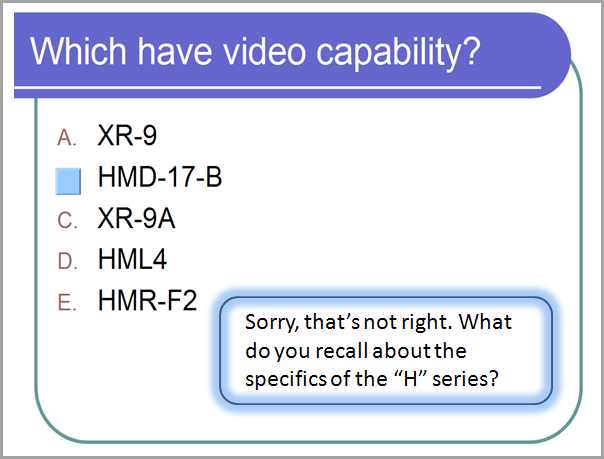

Figure 2: Good hints help the learner think through to the right answer

(Note: Feedback like “The answer is not C” is not a hint. It still supports guessing.)

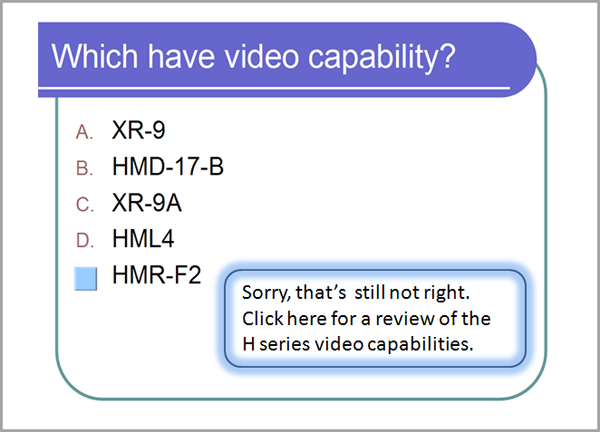

If the learner gets it wrong again? Then we know he’s guessing. Maybe he needs some more help, not just more guesses:

Figure 3: Progressive feedback does not just ask the learner to keep guessing.

Creating this progressive, multilayered type of feedback – with the learner receiving different messages each time – just requires more patience than technical skill. Those working with Web design tools can do it with JavaScript; those using PowerPoint or a slide-based tool can achieve it with a carefully planned branching slide layout.
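For those going the script route, here is a minimal sketch of the idea in TypeScript (which compiles to JavaScript). Everything in it is my own illustration rather than code from a real course: the `Question` shape, the `ProgressiveFeedback` class, its `respond` method, and the bagging question with its wording are all hypothetical. The only technique on display is counting wrong attempts and using that count to pick the next message.

```typescript
// Hypothetical shape for one quiz question; every field name is illustrative.
interface Question {
  correctChoice: string;
  // Messages for the 1st, 2nd, 3rd... wrong answer, in the order shown.
  wrongAnswerFeedback: string[];
  correctFeedback: string;
}

// Tracks wrong attempts on a single question and returns a different
// message each time, instead of a flat "Wrong. Try again!"
class ProgressiveFeedback {
  private readonly question: Question;
  private wrongAttempts = 0;

  constructor(question: Question) {
    if (question.wrongAnswerFeedback.length === 0) {
      throw new Error("Provide at least one wrong-answer message.");
    }
    this.question = question;
  }

  respond(choice: string): string {
    if (choice === this.question.correctChoice) {
      return this.question.correctFeedback;
    }
    const messages = this.question.wrongAnswerFeedback;
    // Once the list runs out, stay on the last (most supportive) message.
    const index = Math.min(this.wrongAttempts, messages.length - 1);
    this.wrongAttempts += 1;
    return messages[index];
  }
}

// Placeholder content, invented for this sketch.
const sampleQuestion: Question = {
  correctChoice: "bread",
  wrongAnswerFeedback: [
    "Not quite. Think about which items are easily crushed, then try again.",
    "Still not right. Would you like to review the section on fragile items before trying again?",
  ],
  correctFeedback: "Yes. Bread goes on top so nothing heavy can crush it.",
};
```

With this setup, creating `new ProgressiveFeedback(sampleQuestion)` and calling `respond("canned soup")` twice returns the hint first and the offer of review second. The messages live in a plain list, so adding a third or fourth layer of help means writing another sentence, not more code.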

Feedback that harms

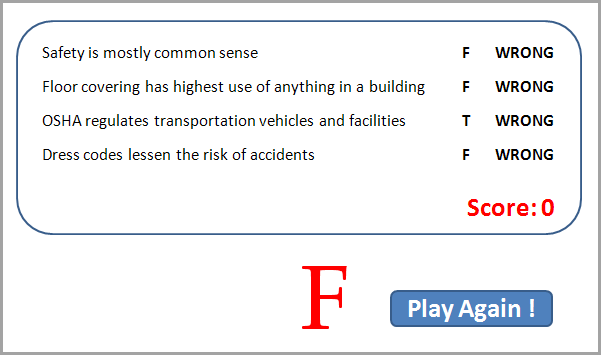

If you take nothing else away from anything I say in my whole life, take this: The point of instruction is to build gain, not expose inadequacy. What is the learner supposed to learn from the feedback below? How does it support gain? What does it do toward improving performance? And what are the odds that the person is motivated to “Try again!”? The example below shows intentional overkill from me (although modeled on something real I once saw), but consider the damage this kind of feedback might do. Anything would be better. In crafting feedback, watch out for negative or punitive messages and for the connotations implicit in the example’s bold type, its use of “wrong,” the zero and the F, and the red font. What other “mean feedback” have you experienced with online quizzes and interactions? It isn’t unusual to find big red Xs, slash marks, or annoying honking sounds. Look for ways to guide and support learners, not ridicule them.

Figure 4: Punitive feedback doesn’t help anyone.

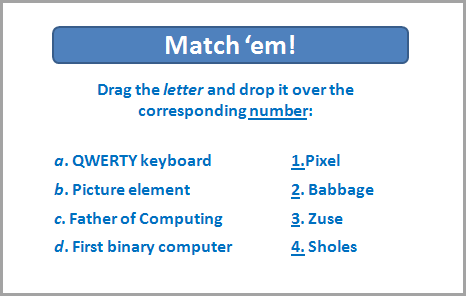

Interactions that make sense

I once had a friend whose boss fell in love with drag-and-drop interactions and instructed her – regardless of content or instructional intent – to insert them at regular intervals into every program she developed. The novelty for learners wore off very quickly, and rarely was there any need for the interaction. It was not informative or engaging, and was no more “interactive” than clicking a next button. It took up time, and as often as not focused on asking the learner to recall facts – random pieces of data culled from the content – that would never be of any real use at work:

Figure 5: What is the point of dragging and dropping words?

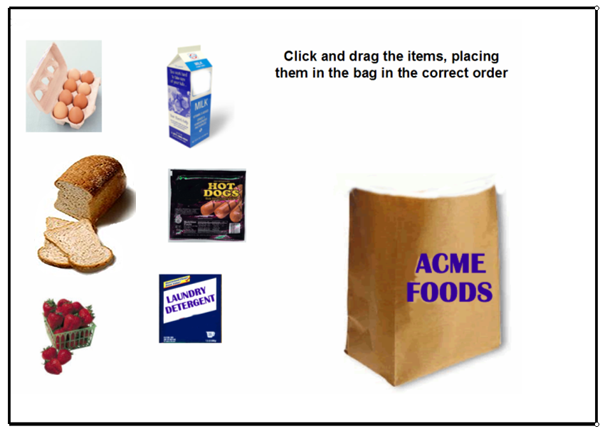

For some content the drag-and-drop interaction makes sense. The example in Figure 6 below shows an interaction that provides close-to-real practice and a chance to consider why a choice matters, before having the new bagger handle real bread and (expensive) strawberries. What are real instances where a worker needs to put this thing in that place? Putting a machine part into place? Putting a piece of data into a spreadsheet? Drag-and-drop interactions might be good for those.

Figure 6: Practice moves toward the more realistic — without harming any groceries.

Some thought and creativity can help move weak assessments and interactions up to, if not a perfect world, then at least a world more useful for the learner. Keep asking what helps, what guides, and what supports gain – and leave out things that don’t.

(Figure 6. Bagging image from Bozarth, J. eLearning Solutions on a Shoestring (2005). San Francisco: Pfeiffer.)

Want more?

For more about that perfect world I mentioned in the opening paragraph, see this review of Clark Aldrich’s Complete Guide to Simulations and Serious Games: http://www.learningsolutionsmag.com/articles/467/book-review-the-complete-guide-to-simulations--serious-games-by-clark-aldrich

And see this Learning Solutions article on testing: http://www.learningsolutionsmag.com/articles/590/the-roles-and-design-of-tests-in-online-instruction